As we covered in Part 1, the world of AI chipsets is changing radically. It is full of innovation and intrinsically difficult to keep up with, because there are so many players and so many overlapping, conflicting, and competing initiatives.

However, there’s one consistent element.

These chips are hot, and most data centers cannot cool them without a different cooling approach.

That seems strange to many, since for decades, we’ve been cooling servers with chilled air. Except for some niche use cases, it’s been the standard for cooling data center hardware.

Until now. Enter liquid cooling for AI chipsets.

Understanding TDP

Air-cooled data centers have functioned for decades. We adopted air cooling because the original server closets used air cooling. Today, air cooling is a massive business with dozens of manufacturers, using enormous chillers, fans, heat exchangers and evaporative cooling towers to keep servers functioning.

But we’ve reached an inflection point. Today’s chipset and server designers are making servers hotter than ever before and air cooling is struggling to keep up.

Once upon a time, servers had one or two CPUs, and each processor consumed, at most, 200W of power at 100% utilization. Those servers generated, at most, 500W of heat. Today’s servers have CPUs that consume 500W. These servers are also built with GPUs, from NVIDIA, Intel, and others, that consume large amounts of power. What we have is an industry that’s pushing the envelope on heat output, expressed as thermal design power (TDP), and that’s forcing data center operators to think hard about how to cool these new designs.

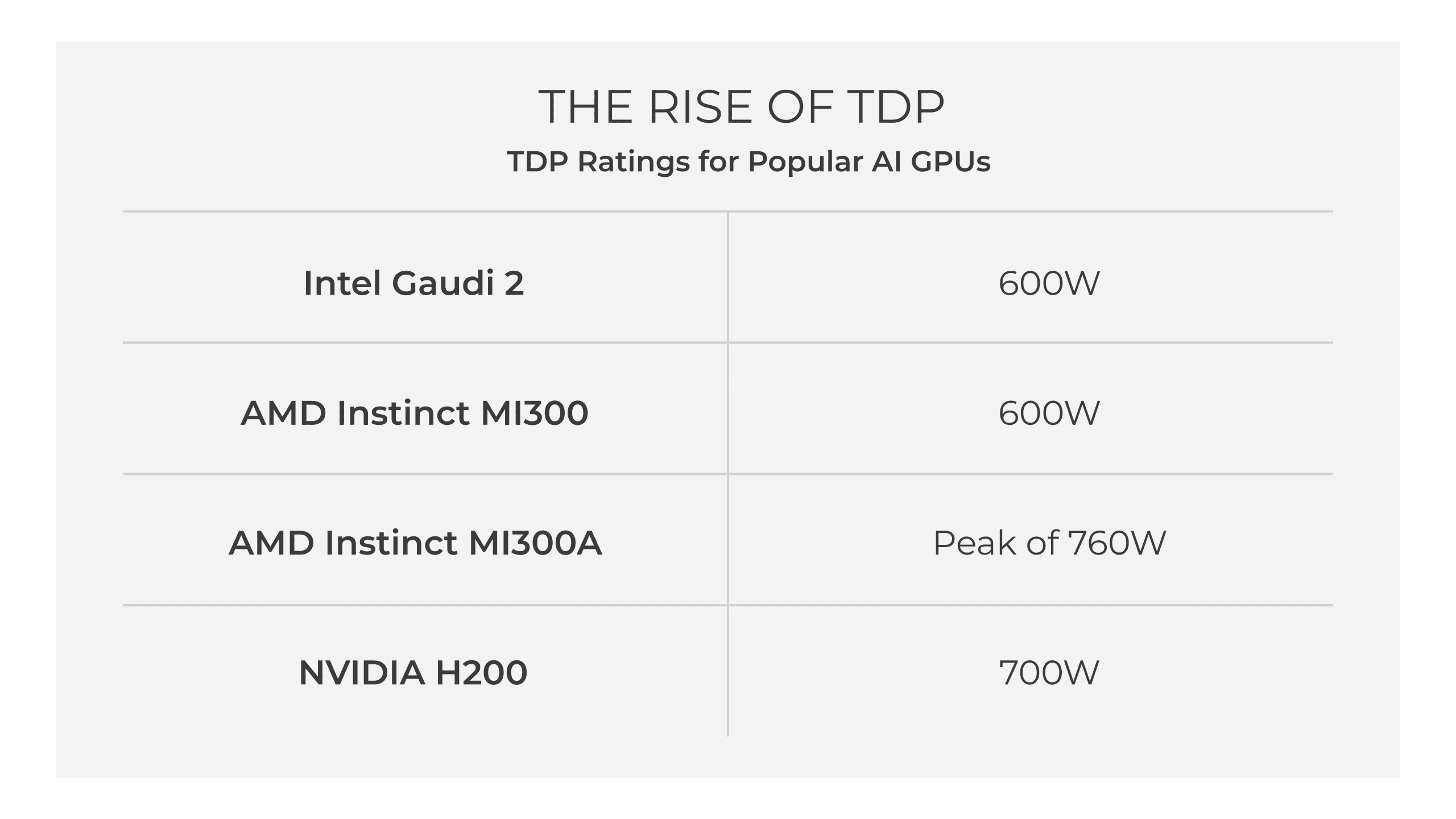

Let’s examine the challenge. Here’s a chart of thermal design power (TDP) specifications for some of the most popular AI GPUs.

So, a server with four AMD Instinct MI300 processors would generate nearly 2.5kW of heat from the GPUs alone, and a rack of these servers would generate over 50kW, before counting the heat generated by the CPUs, the memory, and all the other components.
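The rack-level arithmetic here can be sketched in a few lines. The per-server figure is the roughly 2.5kW quoted in the text; the number of servers per rack is not stated in the article, so any value passed in is an assumption for illustration only.

```python
import math

# GPU-only heat per server, taken from the text (about 2.5 kW for four GPUs).
GPU_HEAT_PER_SERVER_KW = 2.5


def rack_gpu_heat_kw(servers_per_rack: int,
                     per_server_kw: float = GPU_HEAT_PER_SERVER_KW) -> float:
    """GPU-only heat load of a rack; CPUs, memory, and other parts are excluded."""
    return servers_per_rack * per_server_kw


def servers_to_reach(target_kw: float,
                     per_server_kw: float = GPU_HEAT_PER_SERVER_KW) -> int:
    """Smallest number of servers whose GPU heat meets or exceeds target_kw."""
    return math.ceil(target_kw / per_server_kw)
```

For example, `servers_to_reach(50)` returns 20 under these figures, so a rack holding more than 20 such servers would exceed 50kW from GPUs alone.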

That’s too much for air cooling to handle. The Uptime Institute tells us that today’s GPUs — and the heat they generate — cannot be supported in most data centers.

And the problem is getting worse. The upcoming NVIDIA B200 will have a TDP of 1200W per processor. Old assumptions about heat generation per rack no longer hold, and whether existing facilities can cool these chips is an open question.

What we do know is that these chips will require some type of liquid cooling.

The AI Chip Arms Race Leads to Liquid Cooling

Some think of liquid cooling as a niche technology, but for AI, it’s rapidly reaching the mainstream. For years, it’s been the silent hero behind massive supercomputers and HPC clusters handling the world’s most demanding calculations. Even tech giants like Google (TPUs since 2018) and Meta (phased data center integration) are embracing liquid cooling as AI workloads balloon. As hyperscalers push the boundaries of artificial intelligence, their success hinges on the ever-growing importance of liquid cooling technology.

Liquid cooling has been used for hundreds of years in other industrial applications. Because liquids carry far more heat than air for a given volume (water’s volumetric heat capacity is on the order of 3,500 times that of air), they’re simply a more efficient and effective way to move heat away from hot components like AI GPUs. Air-cooled data centers can support around 30kW of heat per rack, while innovators are already announcing that their liquid-cooled racks can manage much more. For example, NVIDIA’s new GB200 NVL72 rack-scale architecture can cool over 120kW of GPU capacity per rack.
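To give a feel for the difference, here is a minimal sketch based on the standard sensible-heat relation, heat = density × volumetric flow × specific heat × temperature rise. The fluid properties are approximate textbook values for water and air near room temperature, and the 10 K coolant temperature rise is an assumption, not a figure from this article.

```python
# Approximate properties near room temperature (textbook values, assumed here).
WATER = {"density_kg_m3": 997.0, "cp_j_kg_k": 4180.0}
AIR = {"density_kg_m3": 1.2, "cp_j_kg_k": 1005.0}


def volumetric_flow_m3_s(heat_w: float, delta_t_k: float, fluid: dict) -> float:
    """Flow needed to carry heat_w with a coolant temperature rise of delta_t_k."""
    return heat_w / (fluid["density_kg_m3"] * fluid["cp_j_kg_k"] * delta_t_k)


RACK_HEAT_W = 120_000.0  # the 120 kW rack figure quoted above
DELTA_T_K = 10.0         # assumed coolant temperature rise

water_flow = volumetric_flow_m3_s(RACK_HEAT_W, DELTA_T_K, WATER)
air_flow = volumetric_flow_m3_s(RACK_HEAT_W, DELTA_T_K, AIR)

print(f"water: {water_flow * 1000 * 60:.0f} L/min")
print(f"air:   {air_flow:.1f} m^3/s")
```

With these assumed values, water needs a few liters per second where air needs roughly ten cubic meters per second to carry the same rack load.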

Liquid cooling is the only way for AI to scale. It offers compelling advantages beyond simply supporting the heat generated by these new chips. It’s a must-have for efficiency, cutting the power consumed by cooling equipment. It allows processors to run at their full potential, reducing slowdowns caused by high heat. The result is higher performance and a longer overall processor lifespan.

As we suggested before, there are a number of liquid cooling technologies in the market and little consensus about which technology is the best, though they broadly fall into two categories.

- Immersion cooling

- Direct-to-chip

The hyperscale companies are using a mix of both technologies, depending on what they’re trying to achieve. Let’s take a look at both of these, exploring pros and cons.

Immersion Cooling

Immersion cooling is what it sounds like: the immersion of hot server components in a liquid. It can use either single-phase or two-phase liquids:

- Single-phase immersion: The entire server or component is submerged in a non-conductive dielectric liquid. Heat is transferred directly from the server to the liquid, which is then cooled by a separate heat exchanger.

- Two-phase immersion cooling: Similar to single-phase, but the liquid boils directly off the server components, creating vapor bubbles that rise and condense in a heat exchanger, completing the cycle.

Immersion cooling can support higher processor temperatures than air and can help increase data center rack densities. But its downsides pose a substantial challenge for many organizations:

- Servers need modifications to function submerged, and the entire data center infrastructure requires an overhaul.

- Immersion cooling can invalidate warranties.

- Staff need additional training in order to maintain immersion-cooled environments.

- Immersion cooling sometimes struggles to cope with high TDP processors without increasing liquid flow rates. Increasing flow rates utilizes more energy and increases pump and fluid distribution complexity.

- Many two-phase immersion cooling fluids are fluorinated chemicals that raise toxicity and environmental concerns, and some are being phased out.

To put it simply, immersion cooling is a radical departure from traditional methods that translates to significant upfront costs and complex implementation and maintenance challenges.

Direct-to-Chip Liquid Cooling

Direct-to-chip is also what it sounds like: the movement of a cooling liquid directly to hot components. Rather than immersing an entire server, this approach pumps fluid directly to a processor, where it absorbs heat. The warmed fluid is then pumped away from the server and cooled.

Two forms exist:

- Single-phase cold plates: These are metal plates that sit directly on top of heat-generating components (CPU, GPU) and absorb heat. Coolant flows through channels or jets in the plate, carrying heat away.

- Two-phase evaporative cooling: This method uses microchannels in the cold plate where the coolant evaporates directly from the heat source. The vapor then travels to a condenser where it cools and condenses back into liquid.

Comparing the two, single-phase uses a simpler design, costs less, scales more easily, and runs quieter. Two-phase claims a higher heat capacity, but comes with a more complicated design, toxic fluids, higher costs, and limited scalability.

For these reasons, single-phase direct-to-chip liquid cooling leads AI infrastructure deployments today, and hyperscalers and chip manufacturers see it as the most easily adopted form of liquid cooling on the market.

Why JetCool?

JetCool’s patented direct liquid cooling (DLC) microconvective technology simplifies cooling for high TDP processors without requiring extensive modifications to servers, racks, or data centers. Unlike traditional microchannel cold plates, JetCool uses an array of microjets to precisely target heat-generating components like CPUs and GPUs, enhancing heat removal efficiency.

Key features include:

- Microjet Impingement Technology: Targets processor hot spots directly, efficiently managing high-density heat fluxes, and eliminating thermal throttling concerns.

- No Thermal Interface Materials (TIMs): JetCool offers two product lines, SmartLids and SmartSilicon, that eliminate the need for degradable thermal pastes, improving heat transfer.

- Warmer Inlet Coolant Temperatures: Supports inlet coolant temperatures up to 60°C, eliminating water consumption and reducing energy consumption by lessening the burden on CRAH and HVAC systems.
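The link between a warm inlet limit and chiller-free operation can be illustrated with a deliberately simplified check. It assumes a dry cooler delivers coolant at ambient temperature plus a fixed approach; the 10°C approach and the function name are hypothetical, chosen for illustration, and real designs also account for intermediate heat exchangers and load.

```python
MAX_INLET_C = 60.0  # inlet coolant limit quoted in the list above


def free_cooling_possible(ambient_c: float,
                          approach_c: float = 10.0,
                          max_inlet_c: float = MAX_INLET_C) -> bool:
    """True if ambient air alone could supply coolant at or below max_inlet_c.

    Simplifying assumption: coolant leaves the dry cooler at
    ambient_c + approach_c (approach_c is a hypothetical default).
    """
    return ambient_c + approach_c <= max_inlet_c
```

Under these assumptions, a 45°C day still leaves headroom with a 60°C inlet limit, while the same day would rule out free cooling for a system that needs 30°C coolant.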

Customer benefits:

- High Capacity Cooling: Single-phase direct liquid cooling (DLC) for high-performance processors over 1,500W per socket, obviating the need for chillers and other cooling infrastructure.

- Advanced Solutions for High-Wattage Processors: Supports 4,000W processors with SmartSilicon technology, which integrates cooling directly into the chip substrate for precise temperature control.

- Efficient GPU Cooling: Effectively cools GPU chipsets in production environments with inlet temperatures up to 60°C, facilitating free cooling almost everywhere globally.

This approach yields significant efficiencies, achieving Power Usage Effectiveness (PUE) as low as 1.02 in environments utilizing NVIDIA GPUs.
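For readers less familiar with the metric, PUE is total facility power divided by IT equipment power, so a value of 1.0 would mean zero overhead. The sketch below uses that standard definition; the 1,000kW IT load in the usage note is a hypothetical round number, not a figure from a JetCool deployment.

```python
def pue(it_power_kw: float, overhead_power_kw: float) -> float:
    """Power Usage Effectiveness: total facility power divided by IT power."""
    if it_power_kw <= 0:
        raise ValueError("IT power must be positive")
    return (it_power_kw + overhead_power_kw) / it_power_kw


def overhead_for_pue(it_power_kw: float, target_pue: float) -> float:
    """Non-IT power (cooling, power distribution, lighting) implied by a PUE."""
    return it_power_kw * (target_pue - 1.0)
```

Under this definition, a PUE of 1.02 at a hypothetical 1,000kW IT load implies about 20kW of non-IT overhead.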

Conclusion

It’s an exciting time in the data center industry: generative AI infrastructure is expanding rapidly, turning a once-mature technology space into something innovative again. JetCool remains at the forefront of solving the challenges around the growth of AI with our direct-to-chip single-phase liquid cooling, which allows organizations to sidestep the challenges of heat rejection without impeding growth.
